Ethical AI in marketing: why bias matters

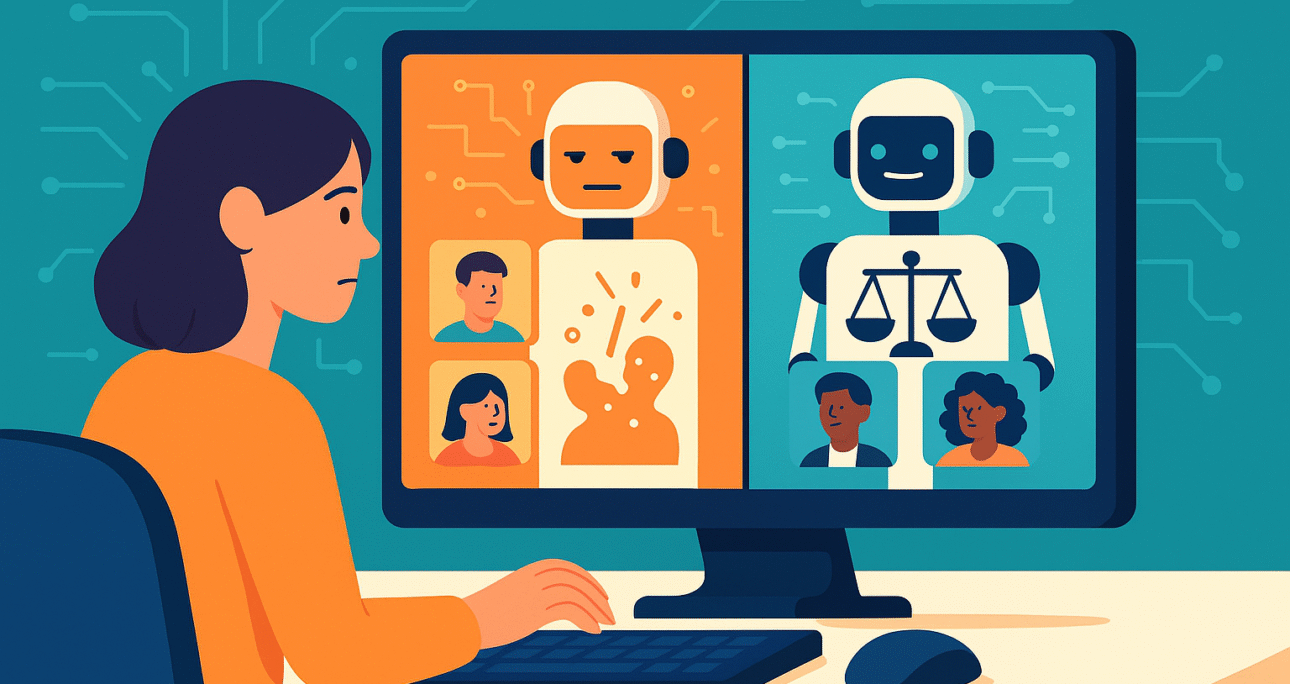

The rise of generative AI has transformed digital marketing, enabling personalized campaigns at unprecedented speed. Yet ethical AI in marketing has become a pressing concern, because large language models (LLMs) can reproduce biases embedded in their training data. These biases, often linked to gender, ethnicity, or socioeconomic status, can lead to unfair targeting, misrepresentation, or exclusion of audiences. For brands seeking long-term trust, recognizing and addressing bias is no longer optional.

Detecting bias in large language models

Bias in LLMs appears when models reproduce patterns from unbalanced training data. For instance, job-related prompts may return stereotypical roles for men and women, or geographic queries might favor certain regions. In marketing, these subtle distortions can shape how ads are distributed and who receives information. Detecting bias involves stress testing models with varied prompts, comparing outputs across demographic groups, and using fairness metrics to quantify the gaps.
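
To make "fairness metrics" concrete, here is a minimal sketch of two common ones: the demographic parity gap (difference between the highest and lowest selection rates) and the disparate impact ratio (lowest rate divided by highest). The function names, the `(group, selected)` record format, and the sample data are illustrative assumptions, not part of any specific tool or campaign.

```python
from collections import defaultdict


def selection_rates(records):
    """Return {group: share of that group that was selected}.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True if the model chose to show the ad to that person.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(records)
    highest = max(rates.values())
    # If no group was selected at all, treat the groups as equal.
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical audit sample: 10 people per group.
sample = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)

print(selection_rates(sample))
print(round(demographic_parity_gap(sample), 3))
print(round(disparate_impact_ratio(sample), 3))
```

A gap near 0 and a ratio near 1 suggest the groups are treated similarly on this one measure. Neither number proves a model is fair, and the choice of groups and of what counts as "selected" is itself a judgment call.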

Strategies to reduce bias in marketing applications

Marketers have several tools to support ethical AI practices. One approach is using diverse and representative datasets for training. Another is integrating bias detection frameworks that evaluate model decisions before campaigns go live. Additionally, introducing human oversight helps validate automated outputs, ensuring that personalization aligns with inclusivity. By embedding these safeguards, companies reduce the risk of discriminatory outcomes and build credibility with consumers.
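
As one way to picture a pre-launch check combined with human oversight, the sketch below blocks a campaign and routes it to a reviewer when targeting rates differ too much between groups. The `prelaunch_gate` function, the `GateResult` type, and the 0.1 threshold are all hypothetical choices for illustration. A real threshold would need to be set by the team and its legal or compliance advisers.

```python
from dataclasses import dataclass


@dataclass
class GateResult:
    approved: bool
    gap: float
    reason: str


def prelaunch_gate(rates_by_group, max_gap=0.1):
    """Decide whether a campaign may launch without human review.

    `rates_by_group` maps each audience group to the share of that group
    the model chose to target. `max_gap` is an assumed tolerance, not a
    regulatory standard.
    """
    if len(rates_by_group) < 2:
        return GateResult(False, 0.0, "need at least two groups to compare")
    gap = max(rates_by_group.values()) - min(rates_by_group.values())
    if gap > max_gap:
        return GateResult(False, gap, "gap exceeds threshold; route to human review")
    return GateResult(True, gap, "within threshold")


# Hypothetical targeting rates produced by a model for two audience groups.
result = prelaunch_gate({"group_a": 0.8, "group_b": 0.5})
print(result.approved, result.reason)
```

The gate does not decide on its own that a campaign is discriminatory. It only flags cases for a person to examine, which keeps the final call with a human.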

Ethical AI as a brand advantage

Beyond compliance, applying ethical AI in marketing creates a competitive edge. Consumers increasingly value transparency and fairness, rewarding brands that prioritize these values. By openly addressing the challenges of bias in LLMs and adopting corrective strategies, businesses not only reduce risks but also position themselves as leaders in responsible innovation.

Source: arXiv